Today we’ll look at the machine learning algorithms, neural networks, and other areas of artificial intelligence that tech giants use to solve real-world problems. We’ll see how 12 big companies are using machine learning in their products.

Table Of Contents 👉

- 12 Companies Using Machine Learning in Cool Ways

- How Quora Uses Machine Learning?

- How Twitter Uses Machine Learning?

- How Apple Uses Machine Learning?

- How eBay Uses Machine Learning?

- How Snapchat Uses Machine Learning?

- How DeepMind Uses Machine Learning?

- How Google Translate Uses Machine Learning?

- How Netflix Uses Machine Learning?

- How Facebook Uses Machine Learning?

- How HSBC Uses Machine Learning?

- How Google Uses Machine Learning?

- How Uber Uses Machine Learning?

- Frequently Asked Questions:

12 Companies Using Machine Learning in Cool Ways

Starting with Quora…

How Quora Uses Machine Learning?

For Ranking Answers [Quora] – Quora uses linear regression, logistic regression, random forests, gradient-boosted trees, and neural networks.

In particular, it relies on gradient-boosted trees and some deep-learning approaches. The ranking also takes into account other factors such as the number of upvotes, the previous answers written by the author, etc.
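As a rough illustration of how a logistic-regression style ranker could combine signals like these, here is a minimal sketch. The feature names, weights, and bias are hypothetical values picked for the example; they are not Quora's actual model, which would learn its parameters from data.

```python
import math

# Hypothetical weights for illustration only; a real ranker learns these from data.
WEIGHTS = {"upvotes": 0.8, "author_prior_answers": 0.3}
BIAS = -2.0


def answer_score(features):
    """Return a logistic-regression style quality score in (0, 1)."""
    # log1p dampens very large counts so one viral answer does not dominate.
    z = BIAS + sum(WEIGHTS[name] * math.log1p(value) for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def rank_answers(answers):
    """Sort answers from highest to lowest score."""
    return sorted(answers, key=lambda a: answer_score(a["features"]), reverse=True)


answers = [
    {"id": "a1", "features": {"upvotes": 3, "author_prior_answers": 10}},
    {"id": "a2", "features": {"upvotes": 120, "author_prior_answers": 2}},
    {"id": "a3", "features": {"upvotes": 0, "author_prior_answers": 0}},
]
ranked_ids = [a["id"] for a in rank_answers(answers)]
```

Gradient-boosted trees or a neural network would replace the single weighted sum with a learned non-linear function, but the ranking step (score every answer, then sort) stays the same.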

To Store Data: Quora uses MySQL to store critical data such as questions, answers, upvotes, and comments. The data stored in MySQL is on the order of tens of TB, not counting replicas.

Its queries per second are on the order of hundreds of thousands. As an aside, Quora also stores a lot of other data in HBase.

Over the years, MySQL usage at Quora has grown in multiple dimensions, including the number of tables, table sizes, read QPS (queries per second), write QPS, etc. To improve performance, Quora implemented caching using Memcache as well as Redis.
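To make the idea of caching in front of a database concrete, here is a minimal sketch of the common cache-aside pattern. It uses plain Python dictionaries as stand-ins for MySQL and for Memcache or Redis; the class and key names are hypothetical, and this is not a description of Quora's actual caching layer.

```python
class CacheAsideStore:
    """Cache-aside reads in front of a slower primary store."""

    def __init__(self, database):
        self.database = database  # stand-in for MySQL
        self.cache = {}           # stand-in for Memcache or Redis
        self.db_reads = 0         # counts how often the slow store is queried

    def get(self, key):
        # Serve from the cache when possible.
        if key in self.cache:
            return self.cache[key]
        # Cache miss: read from the database and remember the result.
        self.db_reads += 1
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value
        return value

    def put(self, key, value):
        # Write to the database, then invalidate the stale cache entry.
        self.database[key] = value
        self.cache.pop(key, None)


store = CacheAsideStore({"question:1": "What is machine learning?"})
first = store.get("question:1")   # miss: goes to the database
second = store.get("question:1")  # hit: served from the cache
```

Repeated reads of the same key reach the database only once, which is how a cache reduces read QPS on the primary store.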

To combine questions: The company uses natural language processing to merge questions that have the same wording or nearly the same intended meaning. You can judge its efficiency by observing how many similar questions are out there.

Other Platforms and Tools: According to a software engineer at Quora, these are the tools and platforms Quora uses to build containers, export logs, build clusters, etc.

- Amazon EKS helped them to get off the ground quickly without having to worry about etc

- Terraform enables them to manage EKS clusters and associated AWS resources using code

- They use Skaffold as a common tool for local development and deployment to production

- BuildKit helps them with smart and concurrent in-cluster builds

- Prometheus performs well for them in collecting and providing time series metrics

(Source: Engineering at Quora)

How Twitter Uses Machine Learning?

Machine Learning at Twitter: Twitter uses machine learning (ML) models in many applications, from ad selection to abuse detection to content recommendations and beyond.

For example, every second on Twitter, ML models perform tens of millions of predictions to better understand user engagement and ad relevance.

In a social network like Twitter, when a person joins the platform, a new node is created. When they follow another person, a following edge is created. When they change their profile, the node is updated.

This stream of events is taken by an encoder neural network that produces a time-dependent embedding for each node of the graph.

The embedding can then be fed into a decoder that is designed for a specific task like predicting future interactions by trying to answer various questions.
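The encoder and decoder flow described above can be sketched in a few lines. This is a toy under strong assumptions: the learned encoder neural network is replaced by a fixed hand-written update rule, timestamps are ignored, and all names (`ToyTemporalEncoder`, `observe_follow`, `interaction_probability`) are hypothetical. It only shows the shape of the idea: events update node embeddings, and a decoder scores a possible future interaction.

```python
import math

DIM = 4  # embedding size, chosen arbitrarily for the example


def initial_embedding(node_id):
    """Deterministic starting vector for a new node (hypothetical initialisation)."""
    return [math.sin(node_id * (k + 1)) for k in range(DIM)]


class ToyTemporalEncoder:
    """Keeps one embedding per node and updates it as events arrive."""

    def __init__(self, mix=0.3):
        self.mix = mix
        self.embeddings = {}

    def add_node(self, node_id):
        # A person joining the platform creates a new node.
        self.embeddings.setdefault(node_id, initial_embedding(node_id))

    def observe_follow(self, src, dst):
        # A follow event creates an edge; here it pulls both embeddings together.
        self.add_node(src)
        self.add_node(dst)
        e_src, e_dst = self.embeddings[src], self.embeddings[dst]
        self.embeddings[src] = [(1 - self.mix) * a + self.mix * b for a, b in zip(e_src, e_dst)]
        self.embeddings[dst] = [(1 - self.mix) * b + self.mix * a for a, b in zip(e_src, e_dst)]


def interaction_probability(encoder, u, v):
    """Decoder: sigmoid of the dot product of two node embeddings."""
    dot = sum(a * b for a, b in zip(encoder.embeddings[u], encoder.embeddings[v]))
    return 1.0 / (1.0 + math.exp(-dot))


encoder = ToyTemporalEncoder(mix=0.3)
encoder.add_node(1)
encoder.add_node(2)
before = interaction_probability(encoder, 1, 2)
encoder.observe_follow(1, 2)
after = interaction_probability(encoder, 1, 2)
```

In this toy, a follow event raises the predicted interaction score between the two users. A real system would learn both the update rule and the decoder from data.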

(Source: Twitter Engineering Blog)

How Apple Uses Machine Learning?

Speech Recognition [Apple’s Siri] – Siri used multivariate Gaussian mixture models (GMMs) until 2011, then hidden Markov models (HMMs) until 2014, and has used Long Short-Term Memory (LSTM) networks since 2014.

The “Hey Siri” detector uses a Deep Neural Network (DNN) to convert the acoustic pattern of your voice at each instant into a probability distribution over speech sounds.
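A probability distribution over speech sounds is typically produced by applying a softmax to the network's raw outputs. The sketch below shows only that final step, with made-up sound classes and scores for a single audio frame; it is not Apple's model or its actual set of sound classes.

```python
import math


def softmax(scores):
    """Convert raw scores into a probability distribution that sums to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical sound classes and network outputs for one audio frame.
SOUND_CLASSES = ["silence", "h", "ey", "s", "ih", "r", "iy"]
frame_scores = [0.1, 2.3, 0.4, -1.0, 0.0, -0.5, 0.2]

frame_probs = dict(zip(SOUND_CLASSES, softmax(frame_scores)))
most_likely = max(frame_probs, key=frame_probs.get)
```

A detector would then look at how these per-frame probabilities evolve over time to decide whether the trigger phrase was spoken.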

Apple has also introduced a scale-invariant convolution layer and used it as the main component of its tempo-invariant neural network architecture for downbeat tracking. This helps the company achieve higher accuracy with lower capacity compared to a standard CNN.

A few months ago, Apple presented a meta-learning framework under which the labels are treated as learnable parameters, and are optimized along with model parameters.

The learned labels take into account the model state, and provide dynamic regularization, thereby improving generalization.

They considered two categories of soft labels: class labels specific to each class, and instance labels specific to each instance.

In the case of supervised learning, training with dynamically learned labels leads to improvements across different datasets and architectures.

In the presence of noisy annotations in the dataset, their framework corrects annotation errors and improves over the state-of-the-art.

(Source: Apple Machine Learning)

How eBay Uses Machine Learning?

Reverse Image Search and Category Detection [eBay] – eBay Shop Bot uses reverse image search to find products from a user-uploaded photo. To find the category of that product, the company uses a ResNet-50 convolutional neural network.

eBay is leading the industry by applying automatic machine translation to commerce. When buyers search in these countries, eBay translates their request, and responds with relevant translated inventory from other countries in other languages.

ML at eBay: “eBay implements machine learning techniques for item-to-product matching, price prediction, and item categorization tasks,” says Kopru, a research scientist at eBay.

“They also employ them for attribute extraction, generating the proper names of browse nodes, filtering product reviews, and more. Machine learning helps them optimize the relevance of shoppers’ search and navigation experiences.”

eBay’s Best Match: The largest scale application of machine learning technology at eBay is currently Best Match, the algorithm used to optimize relevance for buyers during their shopping experiences.

Best Match analyzes everything from item popularity to potential value to the buyer, to terms of service such as return policies.

eBay deploys AI in various areas, from structured data to machine translation to risk and fraud management.

(Source: Wikipedia and eBay Inc Blog)

How Snapchat Uses Machine Learning?

For Face Detection and Filters [Snapchat] – In a research paper published on arXiv, the company appears to detail one of its tricks for compressing crucial image recognition AI while still maintaining acceptable performance.

Ads Recommendation: Exactly what stories end up at the top of your newsfeed depends a lot on how you use Snapchat. And with every swipe and tap, the A.I. learns a little more. Snap then turns that data into something it can sell to people who make Snap stories, who want to make sure the effort they put into making stories turns into clicks.

“Search surfaces interesting Stories created by machine learning and allows our community to find Stories for anything they might be interested in,” said Spiegel, CEO of Snap.

Along with that, Snap’s algorithm pays attention to whether you have a preference for certain published stories and then starts to prefer to show you those publishers.

(Source: Forbes, Inverse, and Quartz)

How DeepMind Uses Machine Learning?

The algorithm used in AlphaGo – AlphaGo combines machine learning and advanced tree search with deep neural networks.

These neural networks take a description of the Go board as an input and process it through 12 different network layers containing millions of neuron-like connections. One neural network, the “policy network,” selects the next move to play.

The other neural network, the “value network,” predicts the winner of the game. That covers AlphaGo. Now let’s talk about AlphaGo Zero (a later version of AlphaGo).

AlphaGo Zero: AlphaGo Zero’s neural network was trained using TensorFlow, with 64 GPU workers and 19 CPU parameter servers.

Only four TPUs were used for inference. The system starts off with a neural network that knows nothing about the game of Go.

It then plays games against itself by combining this neural network with a powerful search algorithm. As it plays, the neural network is updated so that it predicts the next moves as well as the eventual winner of the game.
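One way to picture how a neural network and a search algorithm work together is a PUCT-style selection rule, in which the search prefers moves that the policy output rates highly, that have looked good so far, and that have not been explored much. The sketch below is a simplification under stated assumptions: the move names, numbers, and `c_puct` constant are hypothetical, and the `+ 1` under the square root is a small tweak so that the prior still matters before any move has been visited. It is not DeepMind's implementation.

```python
import math


def puct_select(priors, visit_counts, mean_values, c_puct=1.5):
    """Pick the move maximising Q + U.

    priors:       move -> probability from the policy output
    visit_counts: move -> how often the search has tried the move
    mean_values:  move -> average value-estimate seen after the move
    """
    total_visits = sum(visit_counts.values())
    best_move, best_score = None, -math.inf
    for move, prior in priors.items():
        n = visit_counts.get(move, 0)
        q = mean_values.get(move, 0.0)
        # Exploration bonus: large for high-prior, rarely visited moves.
        u = c_puct * prior * math.sqrt(total_visits + 1) / (1 + n)
        if q + u > best_score:
            best_move, best_score = move, q + u
    return best_move


priors = {"D4": 0.6, "Q16": 0.3, "K10": 0.1}
visit_counts = {"D4": 10, "Q16": 1, "K10": 0}
mean_values = {"D4": 0.1, "Q16": 0.2, "K10": 0.0}

next_move = puct_select(priors, visit_counts, mean_values)
first_move = puct_select(priors, {}, {})  # nothing explored yet
```

Before any exploration the rule simply follows the highest prior; once one move has been visited many times, the bonus shifts the search toward less-explored alternatives.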

There is also a later version (AlphaZero), which is similar to this one but with more powerful training, and it’s the most advanced version yet.

(Source: Deepmind Blog, Google Blog, Wikipedia)

How Google Translate Uses Machine Learning?

Machine Translation [Google Translator] – When it launched in 2016, the deep-learning approach of Google’s neural machine translation relied on a type of software algorithm known as a recurrent neural network.

According to a Google blog post published in June 2020, advances in machine learning (ML) have driven improvements to automated translation, including the GNMT neural translation model introduced in Translate in 2016, which have enabled great improvements to the quality of translation for over 100 languages.

(Source: Google AI Blog)

How Netflix Uses Machine Learning?

User Rating Prediction & ODE [Netflix] – Restricted Boltzmann Machines (RBMs) were used in Netflix to improve the prediction of user ratings for movies based on collaborative filtering.

Netflix also uses machine learning to help shape its catalog of movies and TV shows by learning the characteristics that make content successful.

It uses machine learning to optimize the production of original movies and TV shows in Netflix’s rapidly growing studio. Machine learning also enables Netflix to optimize video and audio encoding, adaptive bitrate selection, and its in-house Content Delivery Network, which accounts for more than a third of North American internet traffic.

It also powers Netflix’s advertising spend, channel mix, and advertising creative so that the company can find new members who will enjoy Netflix.

In addition, Netflix uses techniques and models such as causal modeling, bandits, reinforcement learning, ensembles, neural networks, probabilistic graphical models, and matrix factorization.
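Of the techniques listed, matrix factorization is the easiest to show in a few lines. The sketch below trains user and item factor vectors with stochastic gradient descent on a tiny made-up ratings list. It illustrates matrix factorization only (not the Restricted Boltzmann Machine approach mentioned earlier), and the data, hyperparameters, and function names are assumptions for the example rather than anything from Netflix.

```python
import random


def train_matrix_factorization(ratings, n_users, n_items, n_factors=2,
                               lr=0.05, reg=0.02, epochs=200, seed=0):
    """Learn user and item factors so that their dot product approximates ratings."""
    rng = random.Random(seed)
    user_f = [[rng.uniform(-0.1, 0.1) for _ in range(n_factors)] for _ in range(n_users)]
    item_f = [[rng.uniform(-0.1, 0.1) for _ in range(n_factors)] for _ in range(n_items)]
    for _ in range(epochs):
        for u, i, r in ratings:
            pred = sum(a * b for a, b in zip(user_f[u], item_f[i]))
            err = r - pred
            for k in range(n_factors):
                pu, qi = user_f[u][k], item_f[i][k]
                # Gradient step on squared error with L2 regularisation.
                user_f[u][k] += lr * (err * qi - reg * pu)
                item_f[i][k] += lr * (err * pu - reg * qi)
    return user_f, item_f


def predict(user_f, item_f, u, i):
    """Predicted rating of item i by user u."""
    return sum(a * b for a, b in zip(user_f[u], item_f[i]))


# (user, item, rating) triples; made-up data for illustration.
ratings = [(0, 0, 5), (0, 1, 3), (1, 0, 4), (1, 2, 1), (2, 1, 2), (2, 2, 5)]
user_f, item_f = train_matrix_factorization(ratings, n_users=3, n_items=3)

# Estimate a rating that was never observed: user 0 on item 2.
unseen_estimate = predict(user_f, item_f, 0, 2)
```

Once the factors are trained, `predict` can be called for any user and item pair, including pairs with no observed rating, which is what makes the approach useful for recommendations.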

(Source: Netflix Tech Blog and Netflix Research)

How Facebook Uses Machine Learning?

Detection and Interpretation [Facebook] – Facebook uses DeepText, a text understanding engine, to automatically understand and interpret the content and emotional sentiment of the thousands of posts (in multiple languages) that its users publish every second.

Tulloch, an AI researcher at Facebook, says that the company uses an NLP system built around neural networks to identify posts that are excessively promotional, spam, or clickbait.

The deep learning model filters these types of posts out and keeps them from showing in users’ news feeds. Outside of the news feed, deep learning models are helping Facebook develop products by enabling developers to understand content at a large scale.

(Source: Facebook AI Blog, Forbes and TechTarget)

How HSBC Uses Machine Learning?

Customer Behavior & Loan Process [HSBC] – HSBC is just one of the banks using artificial neural networks to transform how loan and mortgage applications are processed. The company uses neural networks to analyse customers based on their previous behavior patterns.

HSBC recently began utilizing artificial intelligence (AI) from Quantexa (a big data startup in which HSBC has invested) to track and tackle money laundering.

The company also uses artificial intelligence in the form of a chatbot (Amy) to answer customer queries and speed up services, which should save the firm a great deal of money in the long run.

(Source: Algorithm X Lab)

How Google Uses Machine Learning?

Google – The search engine that is powered by AI [Google] – According to Wired’s Cade Metz, Google’s search engine was always driven by algorithms that automatically generate a response to each query.

But these algorithms amounted to a set of definite rules. Google engineers could readily change and refine these rules. And unlike neural nets, these algorithms didn’t learn on their own.

But now, Google has incorporated deep learning into its search engine. And with its head of AI taking over the search, the company seems to believe this is the way forward.

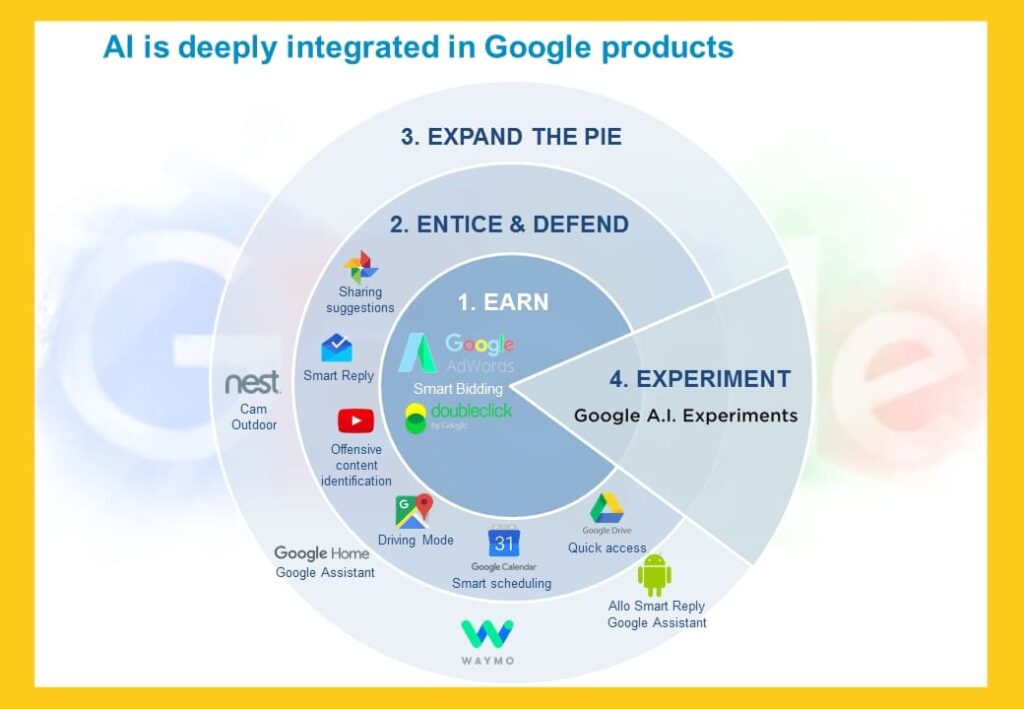

Take a look at the image above. It shows how deeply AI is integrated into most Google products.

ML in Google Products: Google Ads and DoubleClick both incorporate Smart Bidding, which is a machine learning powered automated bidding system.

Google Chrome uses AI to present short and highly related parts of a video while searching for something in Google Search.

It also uses artificial intelligence to analyze the images on a website and plays an audio description or the alt text (when available) for people who are blind or have low vision.

(Source: Wired Magazine and Google Blog)

How Uber Uses Machine Learning?

CoordConv – A New CNN [Uber] – Uber uses the CoordConv layer in many domains that involve coordinate transforms, from designing self-driving vehicles to automating street sign detection to building maps and maximizing the efficiency of spatial movements in the Uber Marketplace.
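The core idea behind a CoordConv layer is to give a convolution access to its own position by appending coordinate channels to the input before the usual convolution is applied. The sketch below shows only that channel-appending step on nested Python lists. The function name and the choice to scale coordinates to the range -1 to 1 are assumptions for this example, and the convolution itself is left out.

```python
def add_coord_channels(image):
    """Append normalised row and column coordinate channels to a C x H x W image.

    `image` is a list of channels, each a list of rows of floats.
    """
    height = len(image[0])
    width = len(image[0][0])

    def scale(index, size):
        # Map 0..size-1 onto [-1, 1]; a single row or column maps to 0.
        return 0.0 if size == 1 else 2.0 * index / (size - 1) - 1.0

    # Every pixel in a row shares the same row coordinate, and likewise for columns.
    row_channel = [[scale(r, height) for _ in range(width)] for r in range(height)]
    col_channel = [[scale(c, width) for c in range(width)] for _ in range(height)]
    return image + [row_channel, col_channel]


image = [[[0.5, 0.1, 0.9], [0.3, 0.7, 0.2]]]  # 1 channel, 2 rows, 3 columns
augmented = add_coord_channels(image)
```

A standard convolution applied to `augmented` can then learn filters that depend on where in the image a feature appears, which is what helps with coordinate-transform tasks.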

Ludwig and Uber’s project: Uber uses Ludwig (a code-free deep learning toolbox) in various projects, including Customer Obsession Ticket Assistant (COTA) to reduce ticket resolution time, information extraction from driver licenses, identification of points of interest during conversations between driver-partners and riders, food delivery time prediction, and much more.

Uber’s One-click chat – OCC is only one out of a multitude of different NLP / Conversational AI initiatives at Uber. One-click chat (OCC) leverages Uber’s machine learning platform, Michelangelo, to perform NLP on rider chat messages, and generate appropriate responses.

PRDS inside Uber – Uber uses Plato Research Dialogue System (PRDS) to create, train, and evaluate conversational AI agents in various environments.

It supports interactions through speech, text, or dialogue acts and each conversational agent can interact with data, human users, or other conversational agents.

(Source: Engineering at Uber)

Note: We’ll add more companies like Neuralink, Reddit, Tesla, Tata, Alibaba, IBM, Nvidia, Microsoft, NASA, Spotify, Starbucks, Salesforce, Amazon, and other big companies and organizations very soon.

If you have any valuable info regarding any big AI and ML companies and want us to add anything to this article, please share it with us on any of our social media accounts (@TheInsaneApp). Your feedback matters to us!

Frequently Asked Questions:

Which Companies Use Machine Learning Most?

Here are some examples of big companies that use machine learning the most: Yelp, Pinterest, Facebook, Google, Apple, Uber, Amazon, Microsoft, Baidu, IBM, HubSpot, Twitter, Salesforce, Netflix, Intel, Wells Fargo, Peoplise, etc.

Does Starbucks Use Machine Learning?

Yes, Starbucks uses machine learning (ML) to suggest appropriate products to its customers and enhance its business functions.

Does NASA Use Machine Learning?

Yes, NASA uses machine learning. Examples include self-driving rovers on Mars (the Spirit and Opportunity rovers), finding other planets in the universe (Planetary Spectrum Generator), the robotic astronaut (Robonaut), and navigation on the Moon (Deep Learning Planetary Navigation).

Does SpaceX Use Artificial Intelligence?

Yes, SpaceX heavily uses machine learning. For example, SpaceX utilizes an AI-powered autopilot system that helps rockets travel from launch to docking with the ISS.

SpaceX’s AI system tracks fuel reserves and usage, parabolic flight and weather conditions, liquid engine sloshing, and other variables that impact rocket flight.

Does Amazon Use Machine Learning?

Yes, Amazon heavily uses machine learning. Some examples of where Amazon uses ML are the Alexa engine, Alexa smart home devices, Amazon Visual Search, the Amazon Shopping app, Amazon AWS, Amazon Prime, etc.

How Many Companies Are Using Machine Learning?

There are thousands of companies using machine learning and artificial intelligence. Top 100 companies that use AI and ML are given here.