On Tuesday, OpenAI announced GPT-4, the latest version of its flagship large language model, claiming it scores in roughly the top 10% of human test-takers on some exams. According to OpenAI, GPT-4 is "larger" than its predecessors.

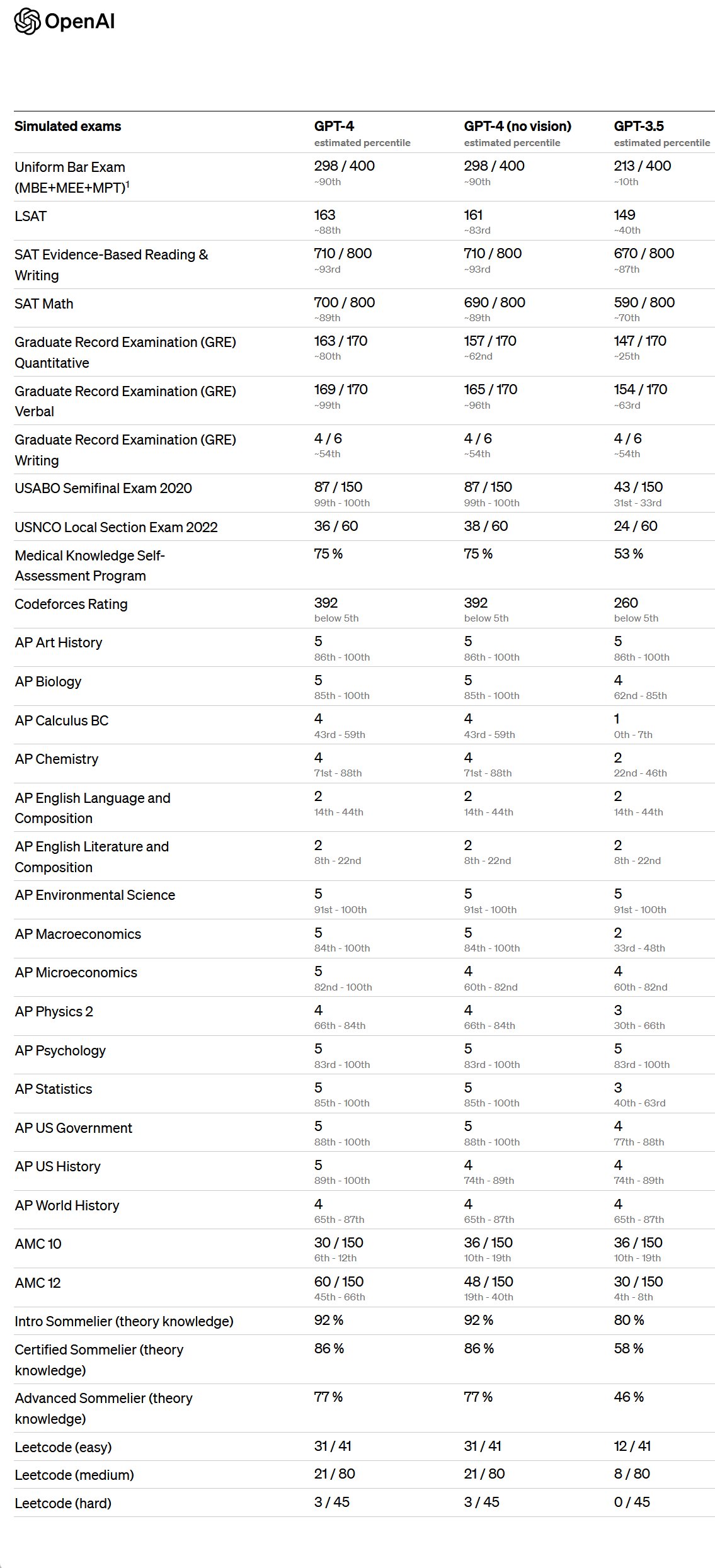

OpenAI claims that GPT-4 scored at the 90th percentile on a simulated bar exam, the 93rd percentile on the SAT reading exam, and the 89th percentile on the SAT Math exam.

Despite these results, OpenAI cautions that the model is not flawless and still falls short of humans in many real-world scenarios. It continues to "hallucinate," meaning it can generate false information, so it is not fully reliable on facts, and it can insist on an answer even when that answer is wrong.

In a blog post, the company stated that GPT-4 still has many known limitations, including social biases, hallucinations, and susceptibility to adversarial prompts, which it is working to address.

According to OpenAI, the difference between GPT-3.5 and GPT-4 might not be noticeable in a casual conversation. However, when the task becomes more complex, GPT-4 surpasses GPT-3.5 in terms of reliability, creativity, and the ability to handle more detailed instructions.

The new model will be available to paying ChatGPT Plus subscribers and through an API that lets developers build it into their own applications. OpenAI plans to charge about 3 cents per 1,000 prompt tokens (roughly 750 words of input) and 6 cents per 1,000 response tokens (roughly 750 words of output).
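To make the pricing concrete, here is a small cost estimator. It is a sketch that assumes the launch rates of $0.03 per 1,000 prompt tokens and $0.06 per 1,000 response tokens, plus the rough rule of thumb that 1,000 tokens is about 750 English words. The function and constant names are hypothetical, and real token counts vary with the text, so treat the result as an approximation only.

```python
# Rough GPT-4 API cost estimate (hypothetical helper, not an OpenAI tool).
# Assumptions: launch rates of $0.03 / 1K prompt tokens and $0.06 / 1K
# response tokens, and ~750 English words per 1,000 tokens. Actual token
# counts depend on the text and the tokenizer.

PROMPT_PRICE_PER_1K_TOKENS = 0.03      # USD per 1,000 prompt (input) tokens
COMPLETION_PRICE_PER_1K_TOKENS = 0.06  # USD per 1,000 response (output) tokens
WORDS_PER_1K_TOKENS = 750              # rule of thumb, not exact


def words_to_tokens(words: int) -> float:
    """Approximate the token count for a given number of words."""
    return words * 1000 / WORDS_PER_1K_TOKENS


def estimate_cost(prompt_words: int, response_words: int) -> float:
    """Return an approximate cost in US dollars for one request."""
    prompt_cost = words_to_tokens(prompt_words) / 1000 * PROMPT_PRICE_PER_1K_TOKENS
    response_cost = words_to_tokens(response_words) / 1000 * COMPLETION_PRICE_PER_1K_TOKENS
    return prompt_cost + response_cost


if __name__ == "__main__":
    # 750 words in and 750 words out: about 3 cents + 6 cents.
    print(f"${estimate_cost(750, 750):.2f}")
```

Because response tokens cost twice as much as prompt tokens under these assumed rates, a request that generates long answers costs noticeably more than one that sends a long prompt and gets a short reply.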
