In a groundbreaking study published in Nature Neuroscience, scientists from the University of Texas at Austin used a Generative Pre-trained Transformer (GPT) AI model, similar to ChatGPT, to decode human thoughts from functional MRI (fMRI) recordings with up to 82% accuracy.

The team believes that this unprecedented level of accuracy in interpreting human thoughts from non-invasive signals will open up numerous scientific opportunities and potential applications.

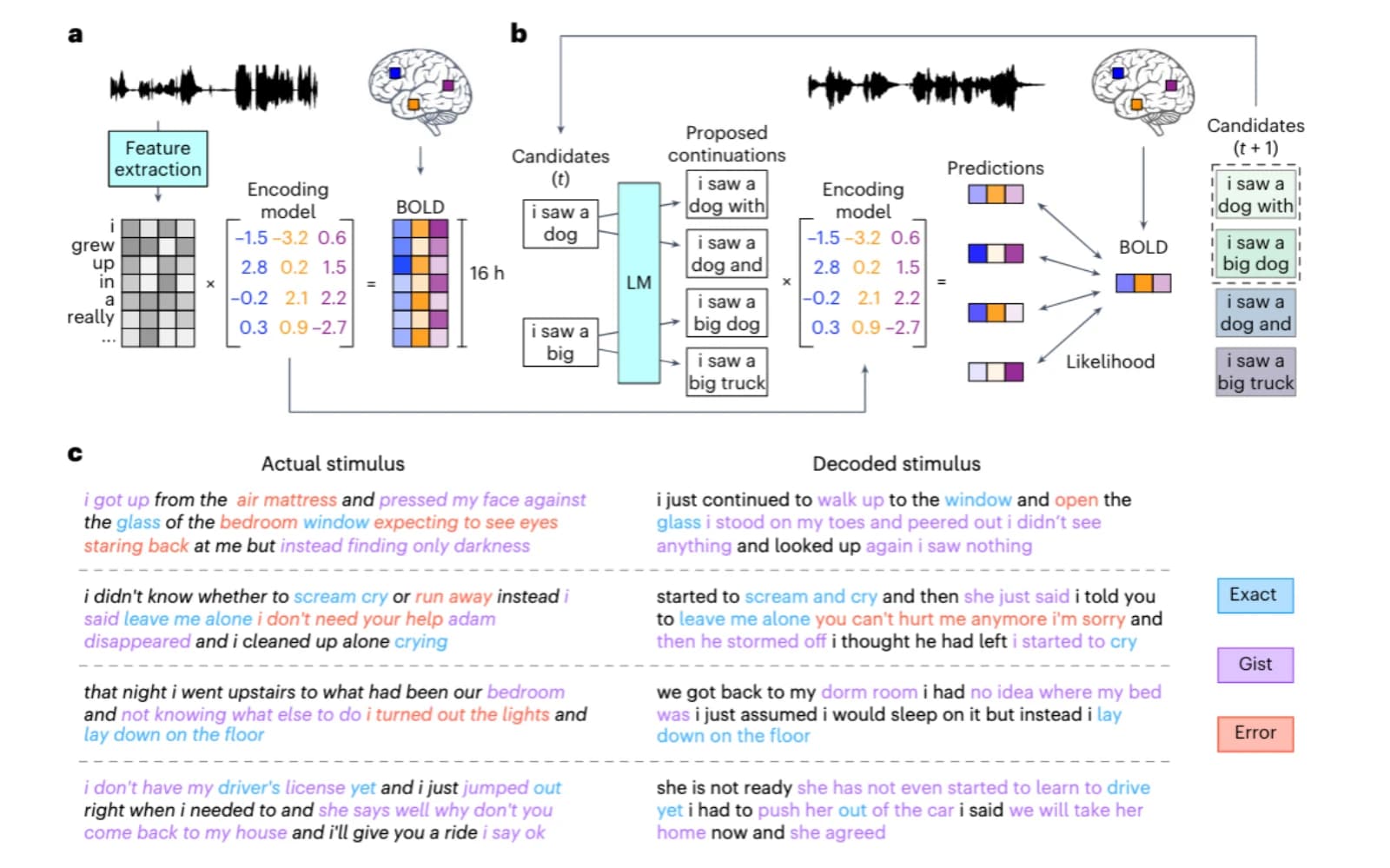

The researchers used fMRI to record 16 hours of brain activity from each of three individuals while they listened to stories. They then analyzed the recordings to identify the neural signals corresponding to individual words.

Decoding words from non-invasive recordings has been difficult because, although fMRI has high spatial resolution, its temporal resolution is low. A brief burst of neural activity produces a blood-flow signal that rises and falls over roughly 10 seconds, so even though fMRI images are of excellent quality, a single image can capture the merged signals of nearly 20 English words spoken at a typical pace.
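
As a back-of-the-envelope check, assume a typical speaking rate $r$ of about 2 words per second (an assumed figure, not one reported in the study coverage above). Multiplying it by the roughly 10-second duration $T$ of a single signal gives the number of words blended into one image:

$$
N_{\text{words}} \approx r \times T \approx 2\,\frac{\text{words}}{\text{s}} \times 10\,\text{s} = 20\ \text{words}
$$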

Before GPT large language models (LLMs) emerged, decoding human thoughts from non-invasive recordings was a nearly impossible challenge: such methods could recognize only a limited set of specific words that a person was thinking.

However, by employing a custom-trained GPT LLM, the researchers built a powerful tool for continuous decoding. The LLM is especially valuable here because there are far more words to decode than brain images available, so the model's knowledge of which word sequences are plausible helps fill in what the images alone cannot determine.
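
A toy sketch can illustrate why a language model helps when there are more words than images. It is not the study's code, and everything in it is hypothetical: the integer word features, the bigram table standing in for GPT, and the names `FEATURES`, `BIGRAMS`, `predict_images` and `beam_decode`. Each simulated image is the sum of the features of two consecutive words, so word order inside an image is lost; the language model proposes only plausible continuations, and a simple beam search keeps the candidates whose predicted images best match the observed ones.

```python
"""Toy sketch (not the study's code): decoding more words than brain images.

All names and numbers are hypothetical:
- each simulated "image" is the SUM of integer features for the words heard
  during it, so word order within one image is lost;
- a tiny bigram table stands in for the GPT language model;
- beam search keeps the candidates whose predicted images fit the data best.
"""
import math

WORDS_PER_IMAGE = 2  # hypothetical: each image blends two consecutive words

# Hypothetical 3-D "semantic features" for each word.
FEATURES = {
    "dogs": (3, 0, 0),
    "cats": (0, 3, 0),
    "chased": (0, 0, 2),
    "the": (1, 0, 0),
    "a": (0, 1, 0),
    "cat": (0, 2, 1),
    "dog": (2, 0, 1),
}

# Hypothetical bigram "language model": P(next word | previous word).
BIGRAMS = {
    "<s>": {"dogs": 0.5, "cats": 0.5},
    "dogs": {"chased": 1.0},
    "cats": {"chased": 1.0},
    "chased": {"the": 0.7, "a": 0.3},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.5, "dog": 0.5},
    "cat": {"chased": 1.0},
    "dog": {"chased": 1.0},
}


def predict_images(words):
    """Encoding-model stand-in: sum word features over each complete window."""
    images = []
    for start in range(0, len(words) - WORDS_PER_IMAGE + 1, WORDS_PER_IMAGE):
        window = [FEATURES[w] for w in words[start:start + WORDS_PER_IMAGE]]
        images.append(tuple(sum(dim) for dim in zip(*window)))
    return images


def mismatch(words, observed):
    """Squared error between predicted and observed images for complete windows."""
    return sum(
        (p - o) ** 2
        for pred, obs in zip(predict_images(words), observed)
        for p, o in zip(pred, obs)
    )


def beam_decode(observed, n_words, beam_width=3):
    """Let the LM propose next words; keep the beams that best fit the images."""
    beams = [([], 0.0)]  # (words so far, summed log LM probability)
    for _ in range(n_words):
        candidates = []
        for words, logp in beams:
            prev = words[-1] if words else "<s>"
            for nxt, p in BIGRAMS[prev].items():
                candidates.append((words + [nxt], logp + math.log(p)))
        # Rank by fit to the images first, then by LM probability.
        candidates.sort(key=lambda c: (mismatch(c[0], observed), -c[1]))
        beams = candidates[:beam_width]
    return beams[0][0]


if __name__ == "__main__":
    observed = predict_images(["dogs", "chased", "the", "cat"])  # 2 images, 4 words
    print(beam_decode(observed, n_words=4))  # → ['dogs', 'chased', 'the', 'cat']
```

In this toy, `["chased", "dogs", "cat", "the"]` produces exactly the same two images as the true sentence, so the images alone cannot settle word order; the bigram table, which never starts a sentence with "chased", is what recovers it. Ranking by image fit first and language-model probability second is just one simple design choice.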

With remarkable accuracy, the GPT model generated intelligible word sequences from brain activity recorded during perceived speech, imagined speech, and even silent videos:

- Perceived speech (subjects listened to a recording): 72–82% accuracy

- Imagined speech (subjects mentally narrated a one-minute story): 41–74% accuracy

- Silent movies (subjects viewed soundless Pixar movie clips): 21–45% accuracy in decoding the subject’s interpretation of the clips

The AI model recovered both the general meaning of the stimuli and specific words and phrases the subjects were thinking, such as “lay down on the floor,” “leave me alone,” and “scream and cry.”

To address concerns about mental privacy, the research team ran an additional study to test whether decoders trained on data from other subjects could decode the thoughts of a new subject.

The researchers found that “decoders trained on cross-subject data performed barely above chance,” showing that accurate decoding depends on training the model with the subject’s own brain recordings. Subjects could also resist decoding by using techniques such as counting to seven, listing farm animals, or narrating a completely different story, all of which significantly reduced decoding accuracy.

However, the researchers acknowledge that future decoders may overcome these limitations, and that decoder predictions could be misused for malicious purposes even when they are inaccurate, much like lie detector results.

“For these and other unanticipated reasons,” the researchers concluded, “it is crucial to raise awareness of the dangers of brain decoding technology and implement policies that safeguard each individual’s mental privacy.”
