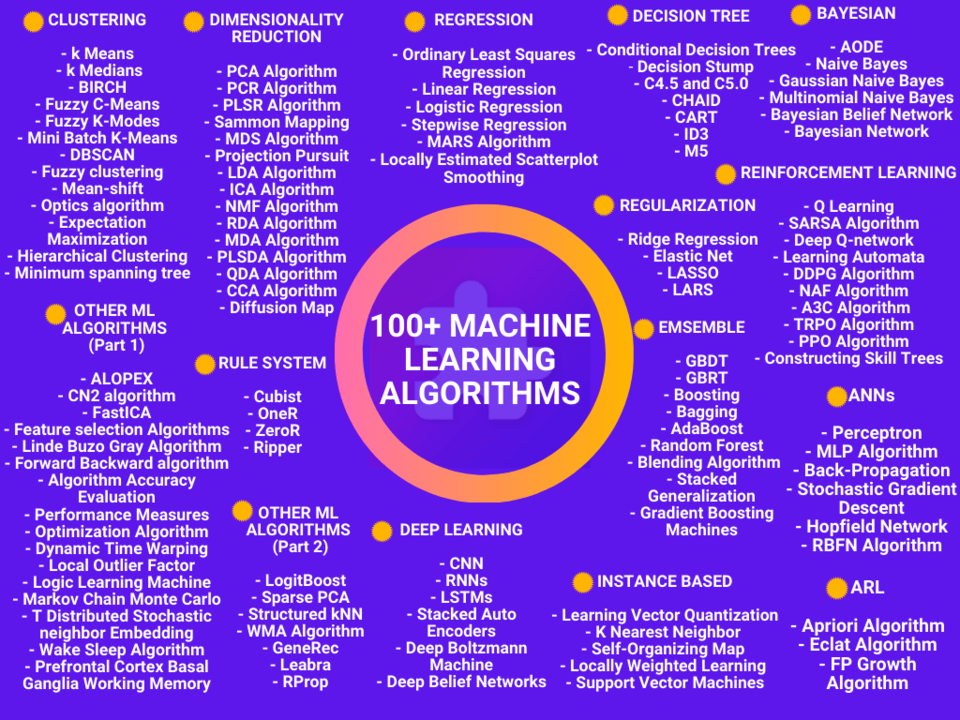

Hey everyone! In this post, we want to share something we think you will find useful: an infographic of 100+ machine learning algorithms in one picture.

This guide has two parts: the first is a mind map of 100+ machine learning algorithms in one picture, and the second covers the types of machine learning algorithms you should know in 2024.

Table Of Contents 👉

- Machine Learning Algorithms PDF

- Types Of Machine Learning

- Types Of Machine Learning Algorithms

- Regression Algorithms

- Deep Learning Algorithms

- Bayesian Algorithms

- Neural Network Algorithms or ANN Algorithms

- Instance-Based Algorithms

- Regularization Algorithms

- Decision Tree Algorithms

- Clustering Algorithms

- Dimensionality Reduction Algorithms

- Ensemble Algorithms

- Association Rule Learning Algorithms

- Rule Based System

- Reinforcement Learning Algorithms

- Other Machine Learning Algorithms

- Frequently Asked Questions:

Machine Learning Algorithms PDF

High-quality PNG and PDF versions of the machine learning algorithms infographic are available below.

Download HQ PNG: 100+ Machine Learning Algorithms

Download PDF: 100+ Machine Learning Algorithms PDF

Types Of Machine Learning

Machine learning approaches and algorithms are generally classified into four broad categories: supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning.

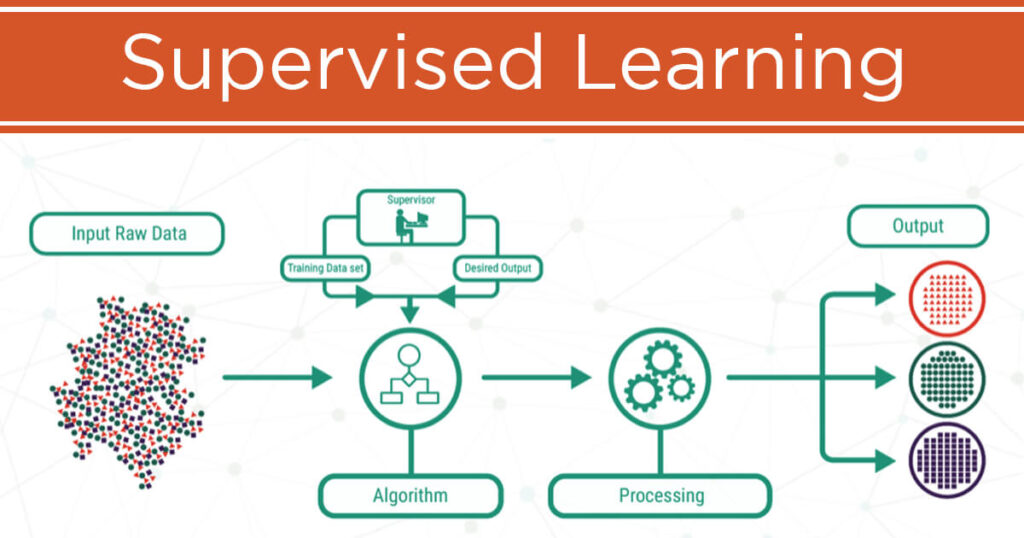

Supervised Learning

Supervised learning is a type of ML where the model is provided with labeled input data and the expected output results.

The AI system is specifically told what to look for, thus the model is trained until it can detect the underlying patterns and relationships, enabling it to yield good results when presented with never-before-seen data.

Supervised Machine Learning Example

For example, suppose every shoe in the training data is labeled ‘shoe’ and every sock is labeled ‘sock’. Once the system has learned from these labels, it can identify a new type of shoe as a ‘shoe’ without being explicitly programmed to do so.

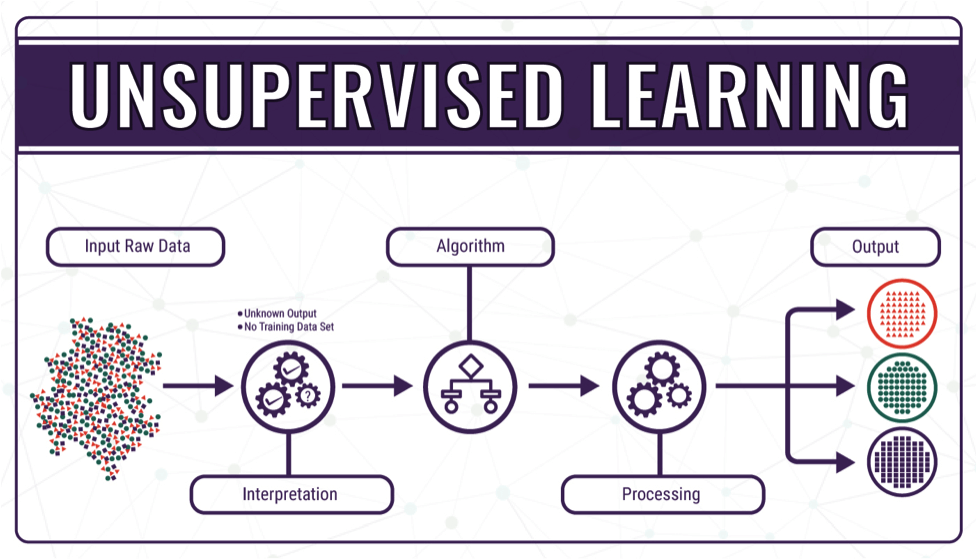

Unsupervised Learning

No labels are given to the learning algorithm, leaving it on its own to find structure in its input. This is partly similar to how humans learn on their own.

In unsupervised learning, the goal is to identify meaningful patterns in the data. To accomplish this, the machine must learn from an unlabeled data set.

In other words, the model has no hints about how to categorize each piece of data and must infer its own rules for doing so.

Unsupervised Machine Learning Example

Taking the same example as in supervised learning, the labels ‘sock’ and ‘shoe’ are now unknown to the system. It can still recognize that the items fall into two different categories and group them accordingly.

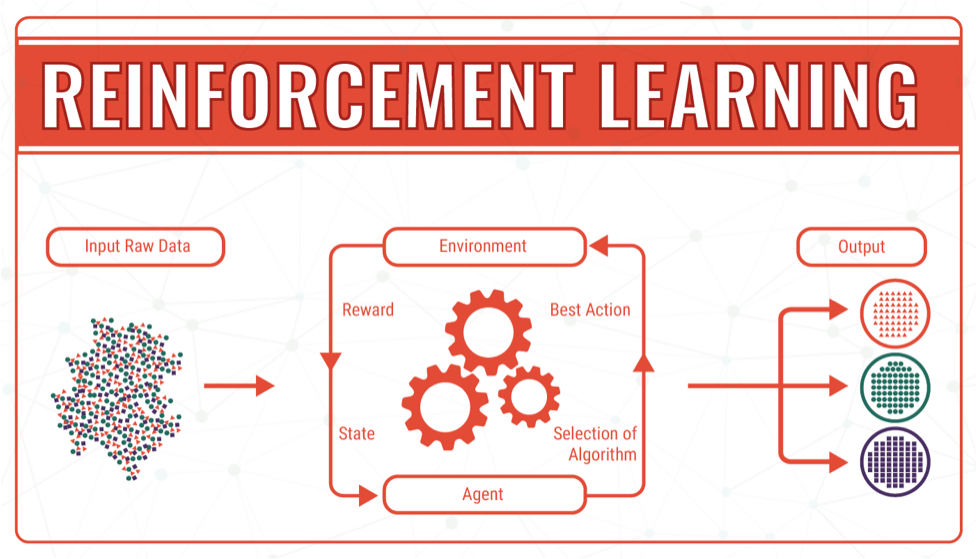

Reinforcement Learning

Reinforcement learning differs from other types of machine learning. In RL, a computer program (the agent) interacts with a dynamic environment in which it must achieve a certain goal, such as driving a vehicle or playing a game against an opponent.

During training, the agent receives rewards (or penalties) for its actions, as defined by a reward function, and it learns to choose actions that maximize the total reward. In some tasks, such as certain games, agents trained with reinforcement learning have learned to outperform humans.

Reinforcement Learning Example

For example, consider teaching new tricks to your pet (let’s say it is your dog).

As a dog doesn’t understand English or any other human language, we can’t tell him directly what to do. Instead, we follow a different strategy.

We set up a situation, and the dog tries to respond to it in various ways. If the dog responds in the desired way, we give him a tasty treat.

Now, whenever the dog is exposed to that same situation, he performs a similar action even more enthusiastically in expectation of more reward (food). This is a classic example of reinforcement learning.

Semi-Supervised Learning

Semi-supervised learning is a type of machine learning in which the algorithm is trained on a combination of labeled and unlabeled data.

It falls between unsupervised learning (with no labeled training data) and supervised learning (with only labeled training data).

Semi-Supervised Learning Example

For example, imagine you want to classify content available on the internet, as Google does. Labeling every webpage by hand is practically impossible, so you need semi-supervised learning algorithms and related methods instead.

According to a blog post published by Google AI, the Google search algorithm uses a variant of semi-supervised learning to rank the relevance of a webpage for a given query.

Now it’s time to take a deeper dive into the types of machine learning algorithms. Let’s begin with regression.

Types Of Machine Learning Algorithms

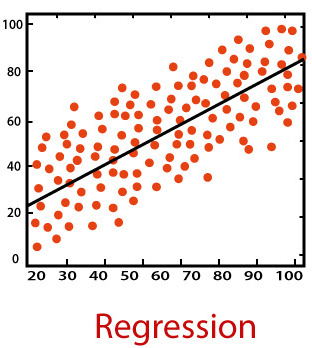

Regression Algorithms

Regression is one of the most important and broadly used methods in machine learning and statistics.

It allows you to make predictions from data by learning the relationship between features of your data and some observed, continuous-valued responses.

Regression is used in a massive number of applications ranging from predicting stock prices to understanding gene regulatory networks.

The most widely used regression algorithms are:

- Linear Regression

- Logistic Regression

- Stepwise Regression

- Ordinary Least Squares Regression (OLSR)

- Multivariate Adaptive Regression Splines (MARS)

- Locally Estimated Scatterplot Smoothing (LOESS)
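
To make the idea of "learning the relationship between features and a continuous response" concrete, here is a minimal sketch of ordinary least squares for a single feature, written in plain Python. The function name `fit_line` and the toy data are illustrative assumptions, not something taken from the infographic.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares (one feature)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical toy data that lies exactly on y = 2x + 1
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
prediction = slope * 5.0 + intercept  # predict the response for an unseen x = 5
print(slope, intercept, prediction)
```

On real data the points will not sit exactly on a line, and the fitted line is simply the one that minimizes the sum of squared errors.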

Deep Learning Algorithms

According to Peter Norvig, the Director of Research at Google and co-author of one of the best AI books, “Artificial Intelligence: A Modern Approach”, deep learning is a kind of learning where the representation you form has several levels of abstraction, rather than a direct input to output.

In short, Deep learning models teach computers to do what comes naturally to humans i.e. learn by example.

Deep learning methods are the main power behind driverless cars, enabling them to recognize a stop sign, or to distinguish a pedestrian from a lamppost.

It is the key to voice control in consumer devices like phones, tablets, TVs, and hands-free speakers.

Here’s a list of the most popular deep learning algorithms you should know as a machine learning or data science enthusiast:

- Convolutional Neural Network (CNN)

- Recurrent Neural Networks (RNNs)

- Long Short-Term Memory Networks (LSTMs)

- Stacked Auto-Encoders

- Deep Boltzmann Machine (DBM)

- Deep Belief Networks (DBN)

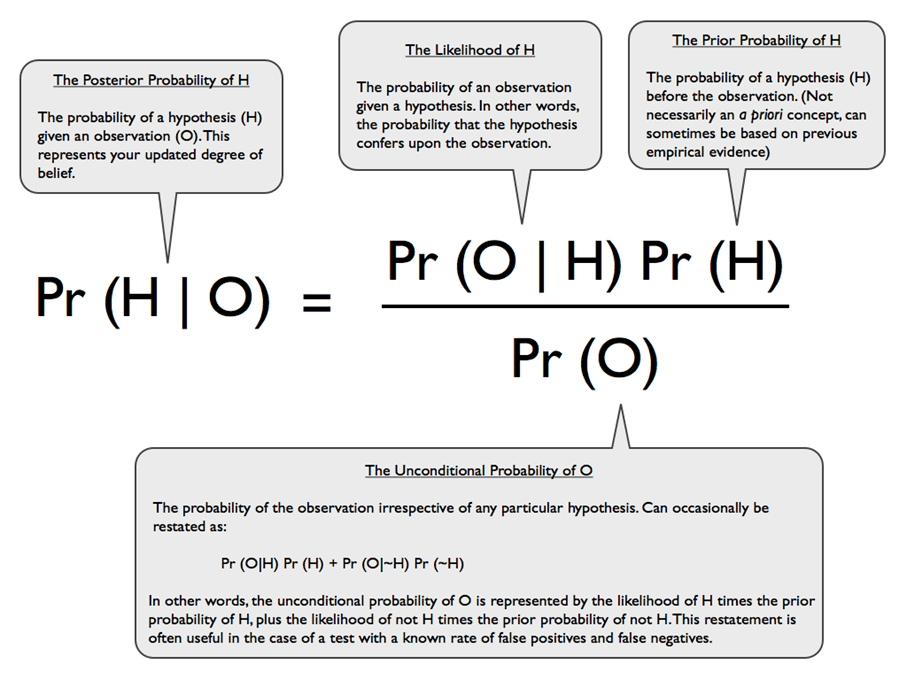

Bayesian Algorithms

Bayesian machine learning algorithms are based on Bayes’ theorem, which calculates the probability of something happening given that something else has already happened.

The Naive Bayes family of classifiers shares a further common principle: every pair of features is assumed to be independent of each other, given the class. Here’s the list of essential Bayesian algorithms:

- Naive Bayes

- Gaussian Naive Bayes

- Multinomial Naive Bayes

- Averaged One-Dependence Estimators (AODE)

- Bayesian Belief Network (BBN)

- Bayesian Network (BN)
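
As a small illustration of Bayes’ theorem, the sketch below computes the probability that an email is spam given that it contains a certain word. The function name `posterior` and all of the probabilities are made-up values chosen only to show the arithmetic.

```python
def posterior(prior, likelihood, likelihood_if_not):
    """Bayes' theorem for a binary hypothesis H and evidence E.

    prior             = P(H)
    likelihood        = P(E | H)
    likelihood_if_not = P(E | not H)
    returns             P(H | E)
    """
    # Total probability of seeing the evidence at all
    evidence = likelihood * prior + likelihood_if_not * (1 - prior)
    return likelihood * prior / evidence


# Hypothetical numbers: 20% of emails are spam, the word appears in
# 60% of spam emails and in 5% of non-spam emails.
p_spam_given_word = posterior(prior=0.2, likelihood=0.6, likelihood_if_not=0.05)
print(p_spam_given_word)
```

A Naive Bayes classifier applies the same rule with several features at once, multiplying the per-feature likelihoods under the independence assumption described above.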

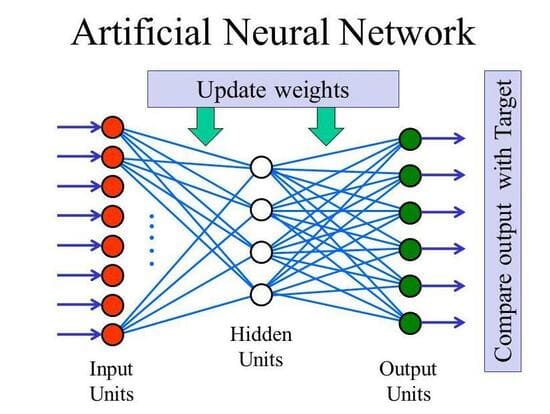

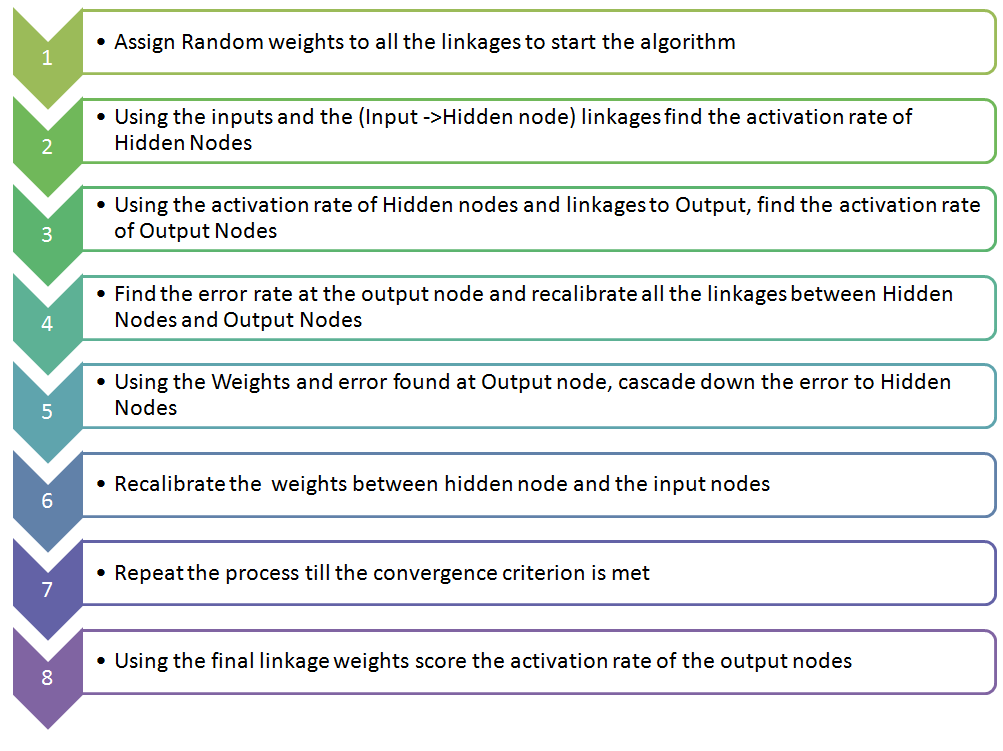

Neural Network Algorithms or ANN Algorithms

Artificial neural networks (ANNs), generally known as neural networks (NNs), are loosely inspired by the human brain. They are made up of artificial neurons, each of which takes multiple inputs and produces a single output.

Put simply, ANNs consist of a layer of input nodes and a layer of output nodes, connected by one or more layers of hidden nodes.

Input layer nodes pass information to hidden layer nodes, and each hidden node fires or remains dormant depending on the evidence presented.

The hidden layers apply weights to the evidence, and when the weighted value at a particular node or set of nodes in the hidden layer reaches some threshold, a value is passed to one or more nodes in the output layer.

The accompanying image shows the framework of how an artificial neural network works.

Common ANN architectures and training algorithms are:

- Perceptron

- Multilayer Perceptrons (MLP)

- Back-Propagation

- Stochastic Gradient Descent

- Hopfield Network

- Radial Basis Function Network (RBFN)
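
The simplest entry in this list, the perceptron, can be sketched in a few lines. The example below trains a single artificial neuron on the logical AND function using the classic perceptron learning rule. The learning rate, the number of epochs, and the helper names are assumptions made for illustration.

```python
def predict(weights, bias, inputs):
    """A single neuron: weighted sum of the inputs followed by a threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1):
    """Perceptron learning rule: nudge the weights whenever a prediction is wrong."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Truth table of logical AND (a linearly separable problem)
and_gate = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(and_gate)
outputs = [predict(weights, bias, inputs) for inputs, _ in and_gate]
print(weights, bias, outputs)
```

A single perceptron can only learn linearly separable functions, which is why multilayer perceptrons trained with back-propagation are used for harder problems.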

Instance-Based Algorithms

Instance-based algorithms make predictions by comparing new instances with instances from the training data that are stored in memory.

They are called instance-based because they build their predictions directly from these stored training instances. Some of the most popular instance-based algorithms are listed below:

- k-Nearest Neighbor (kNN)

- Learning Vector Quantization (LVQ)

- Self-Organizing Map (SOM)

- Locally Weighted Learning (LWL)

- Support Vector Machines (SVM)
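
To show what "comparing new instances with stored instances" looks like in practice, here is a minimal k-nearest neighbor sketch that reuses the shoes-and-socks example from earlier. The features (weight in grams, length in centimeters) and their values are hypothetical.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training instances.

    `train` is a list of (features, label) pairs kept in memory as-is.
    """
    by_distance = sorted(train, key=lambda item: math.dist(item[0], query))
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical training instances: (weight in grams, length in cm) -> label
train = [
    ((900, 30), "shoe"), ((850, 28), "shoe"), ((950, 31), "shoe"),
    ((60, 25), "sock"), ((50, 22), "sock"), ((70, 24), "sock"),
]

print(knn_predict(train, (800, 29)))  # a heavy item, close to the shoes
print(knn_predict(train, (55, 23)))   # a light item, close to the socks
```

There is no separate training step here: all the work happens at prediction time, which is the defining trait of instance-based methods. In practice the features would usually be scaled first so that one of them does not dominate the distance.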

Regularization Algorithms

Regularization is a technique that makes slight modifications to the learning algorithm so that the model generalizes better. This, in turn, improves the model’s performance on unseen data.

These techniques are used in conjunction with regression or classification algorithms to reduce overfitting to the training data.

Tuning them lets us find the right balance between fitting the training data well and predicting well on new data. The most widely used regularization algorithms are:

- Ridge Regression

- Least Absolute Shrinkage and Selection Operator (LASSO)

- Elastic Net

- Least-Angle Regression (LARS)
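
The effect of regularization is easiest to see in ridge regression with a single feature and no intercept, where the weight has a closed form. The sketch below assumes that simplified setting: it minimizes the sum of squared errors plus `penalty * w**2`. The name `ridge_weight` and the data are illustrative.

```python
def ridge_weight(xs, ys, penalty):
    """Closed-form ridge solution for the model y = w * x (no intercept).

    Minimizes sum((y - w * x) ** 2) + penalty * w ** 2,
    which gives w = sum(x * y) / (sum(x * x) + penalty).
    """
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    return sum_xy / (sum_xx + penalty)


# Hypothetical data lying exactly on y = 2x
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

unregularized = ridge_weight(xs, ys, penalty=0.0)   # plain least squares
regularized = ridge_weight(xs, ys, penalty=14.0)    # weight shrunk toward zero
print(unregularized, regularized)
```

A larger penalty shrinks the weight further toward zero, trading a slightly worse fit on the training data for a simpler model.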

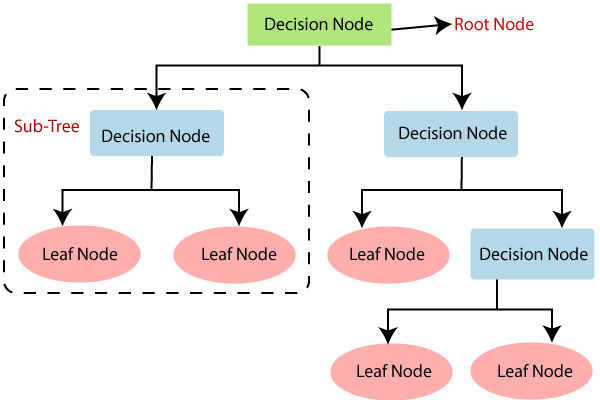

Decision Tree Algorithms

Decision tree algorithms belong to the family of supervised learning algorithms. They can be used to solve both regression and classification problems.

The goal of using a decision tree is to create a model that can predict the class or value of the target variable by learning simple decision rules inferred from prior data (the training data).

To predict a class label for a record, we start from the root of the tree. We compare the value of the root attribute with the record’s attribute. Based on the comparison, we follow the branch corresponding to that value and jump to the next node, repeating the process until we reach a leaf. The most popular decision tree algorithms are given below:

- Chi-squared Automatic Interaction Detection (CHAID)

- Classification and Regression Tree (CART)

- Iterative Dichotomiser 3 (ID3)

- C4.5 and C5.0

- Conditional Decision Trees

- Decision Stump

- M5
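
A decision stump, listed above, is a decision tree with a single split, so it makes a compact example of "learning a simple decision rule from training data". The sketch below tries every threshold on one numeric feature and keeps the rule with the fewest training errors. The function names and the data are assumptions for illustration.

```python
def fit_stump(values, labels):
    """Return (threshold, left_label, right_label) with the fewest training errors.

    The learned rule is: predict left_label if value <= threshold, else right_label.
    """
    best = None
    classes = sorted(set(labels))
    for threshold in sorted(set(values)):
        for left in classes:
            for right in classes:
                errors = sum(
                    (left if v <= threshold else right) != y
                    for v, y in zip(values, labels)
                )
                if best is None or errors < best[0]:
                    best = (errors, threshold, left, right)
    return best[1:]


def predict_stump(stump, value):
    threshold, left, right = stump
    return left if value <= threshold else right


# Hypothetical one-feature dataset
values = [1, 2, 3, 10, 11, 12]
labels = ["small", "small", "small", "large", "large", "large"]

stump = fit_stump(values, labels)
print(stump, predict_stump(stump, 2), predict_stump(stump, 20))
```

Full decision tree algorithms such as CART or ID3 apply the same idea recursively, choosing splits with criteria like Gini impurity or information gain instead of raw error counts.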

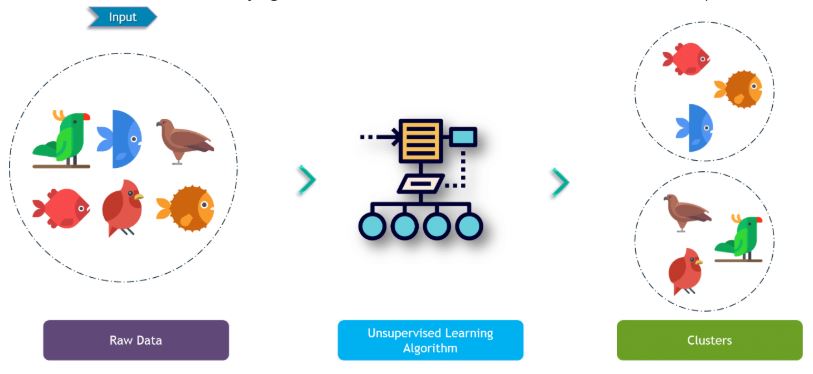

Clustering Algorithms

Grouping similar things together is called clustering. In ML terms, clustering is a machine learning technique that involves grouping data points.

Given a set of data points, we can use a clustering algorithm to assign each data point to a specific group. Popular clustering algorithms include:

- k-Means

- k-Medians

- Expectation Maximization (EM)

- Hierarchical Clustering

- Minimum spanning tree

- BIRCH

- Fuzzy C-Means

- Fuzzy K-Modes

- Mini Batch K-Means

- DBSCAN

- Fuzzy clustering

- Mean-shift

- OPTICS algorithm
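
As a rough sketch of how a clustering algorithm assigns points to groups, here is k-means on one-dimensional data. The starting centroids are passed in explicitly (an assumption made to keep the example deterministic; real implementations usually pick them randomly), and the data is made up.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Plain k-means on numbers: assign each point to the nearest centroid,
    then move every centroid to the mean of its assigned points."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Keep the old centroid if a cluster ends up empty
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical data with two obvious groups
points = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]

centroids, clusters = kmeans_1d(points, centroids=[1.0, 2.0])
print(centroids, clusters)
```

Note that no labels are involved: the algorithm discovers the two groups purely from the distances between points.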

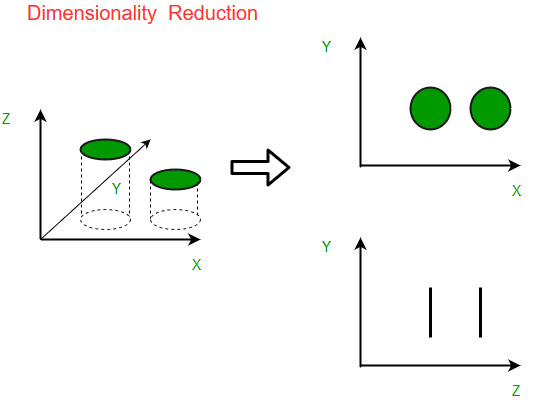

Dimensionality Reduction Algorithms

Dimensionality reduction is typically an unsupervised learning technique.

It reduces the number of dimensions (features) in our data, which can in turn reduce overfitting and variance in our model so that we can make better predictions on our test set.

Some of the most popular and widely used dimensionality reduction algorithms are:

- Principal Component Analysis (PCA)

- Principal Component Regression (PCR)

- Partial Least Squares Regression (PLSR)

- Sammon Mapping

- Multidimensional Scaling (MDS)

- Projection Pursuit

- Linear Discriminant Analysis (LDA)

- Independent component analysis (ICA)

- Non-negative matrix factorization (NMF)

- Regularized Discriminant Analysis (RDA)

- Mixture Discriminant Analysis (MDA)

- Partial Least Squares Discriminant Analysis (PLS-DA)

- Quadratic Discriminant Analysis (QDA)

- Canonical correlation analysis (CCA)

- Flexible Discriminant Analysis (FDA)

- Diffusion map
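
To give a feel for what reducing dimensions means, the sketch below performs PCA on two-dimensional points and projects them onto a single axis. It relies on the closed-form largest eigenvalue of a symmetric 2x2 covariance matrix, so it only works for 2-D input; the function name and the data are illustrative assumptions.

```python
import math


def pca_2d_to_1d(points):
    """Return (direction, explained_ratio, projections) for a list of 2-D points."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    # Entries of the 2x2 sample covariance matrix [[a, b], [b, c]]
    a = sum((p[0] - mean_x) ** 2 for p in points) / (n - 1)
    c = sum((p[1] - mean_y) ** 2 for p in points) / (n - 1)
    b = sum((p[0] - mean_x) * (p[1] - mean_y) for p in points) / (n - 1)
    # Largest eigenvalue of a symmetric 2x2 matrix, in closed form
    largest = (a + c) / 2 + math.sqrt(((a - c) / 2) ** 2 + b ** 2)
    # Matching eigenvector: the first principal component
    if b != 0:
        vx, vy = b, largest - a
    else:
        vx, vy = (1.0, 0.0) if a >= c else (0.0, 1.0)
    norm = math.hypot(vx, vy)
    direction = (vx / norm, vy / norm)
    # One coordinate per point instead of two
    projections = [
        (x - mean_x) * direction[0] + (y - mean_y) * direction[1]
        for x, y in points
    ]
    return direction, largest / (a + c), projections


# Hypothetical points lying on the line y = x
points = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (4.0, 4.0)]

direction, explained, projections = pca_2d_to_1d(points)
print(direction, explained, projections)
```

Because these points lie on a straight line, one dimension captures all of the variance. On real data the explained ratio is below 1, and it indicates how much information is lost by dropping the other dimension.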

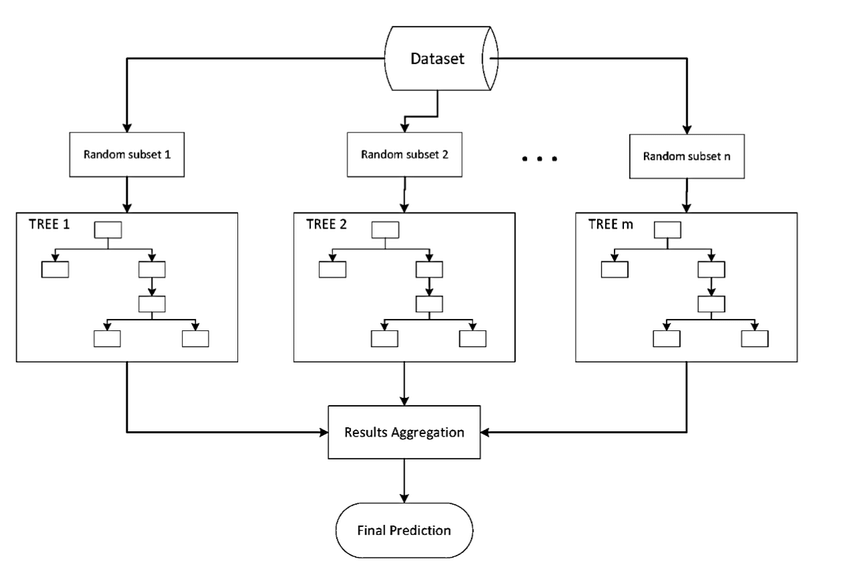

Ensemble Algorithms

Ensemble methods use multiple learning algorithms to obtain better predictive performance than could be obtained from any of the constituent learning algorithms alone.

They usually perform better than single models because they combine different models to achieve higher accuracy.

Here’s a list of ensemble algorithms that you should know about:

- Boosting

- AdaBoost

- Bootstrapped Aggregation (Bagging)

- Weighted Average (Blending)

- Stacked Generalization (Stacking)

- Gradient Boosting Machines (GBM)

- Gradient Boosted Regression Trees (GBRT)

- Random Forest
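
The simplest way to combine models is hard majority voting, which is the idea underneath bagging and random forests. In the sketch below, the three lists of predictions are invented by hand (no real models are trained) purely to show how models that make different mistakes can correct each other.

```python
from collections import Counter


def majority_vote(predictions_per_model):
    """Combine the models' predictions sample by sample, keeping the most common label."""
    return [
        Counter(votes).most_common(1)[0][0]
        for votes in zip(*predictions_per_model)
    ]


def accuracy(predicted, actual):
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical true labels and hand-written predictions of three weak models,
# each of which is wrong on two different samples
actual = [1, 1, 1, 0, 0, 0]
model_a = [1, 1, 0, 0, 0, 1]
model_b = [1, 0, 1, 0, 1, 0]
model_c = [0, 1, 1, 1, 0, 0]

ensemble = majority_vote([model_a, model_b, model_c])
print(accuracy(model_a, actual), accuracy(ensemble, actual))
```

The gain depends on the models making different errors. If all three models were wrong on the same samples, voting would not help.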

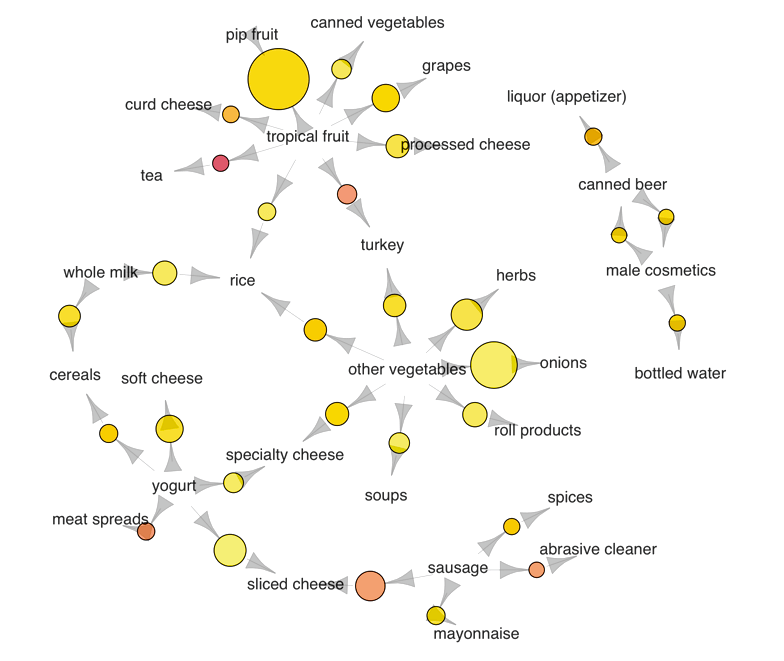

Association Rule Learning Algorithms

Association rule learning is a rule-based machine learning method for discovering interesting relations between variables in large databases.

It is intended to identify strong rules discovered in databases using some measures of interestingness.

Association rules take the form of if/then statements. These statements help to reveal associations between seemingly independent data in a database, relational database, or other information repository.

These rules are used to identify relationships between objects that are frequently used together. Three widely used association rule learning algorithms are:

- Apriori algorithm

- Eclat algorithm

- FP-growth algorithm
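
Two common "measures of interestingness" for an if/then rule are support and confidence. The sketch below computes both for the rule "if bread, then milk" on a handful of made-up shopping baskets. It does not implement Apriori, Eclat, or FP-growth themselves, which are efficient ways of searching for itemsets that score well on measures like these.

```python
def support(transactions, itemset):
    """Fraction of transactions that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Of the transactions containing `antecedent`, the fraction that also
    contain `consequent`."""
    both = set(antecedent) | set(consequent)
    return support(transactions, both) / support(transactions, antecedent)


# Hypothetical shopping baskets
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]

rule_support = support(transactions, {"bread", "milk"})
rule_confidence = confidence(transactions, {"bread"}, {"milk"})
print(rule_support, rule_confidence)
```

Here bread and milk appear together in half of the baskets, and two out of the three baskets with bread also contain milk.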

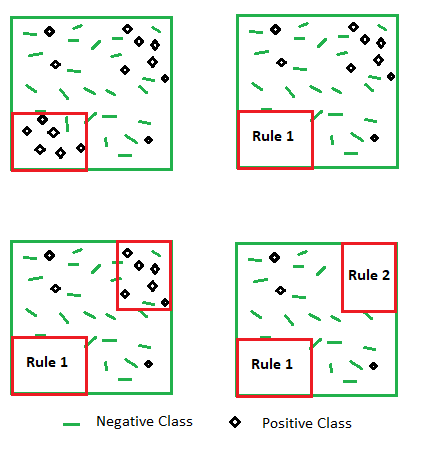

Rule Based System

Rule-based machine learning applies some form of learning algorithm to automatically identify useful rules, rather than requiring a human to apply prior domain knowledge to manually construct rules and curate a rule set. Algorithms that fall into this category are:

- Repeated Incremental Pruning to Produce Error Reduction (RIPPER)

- Cubist

- OneR

- ZeroR

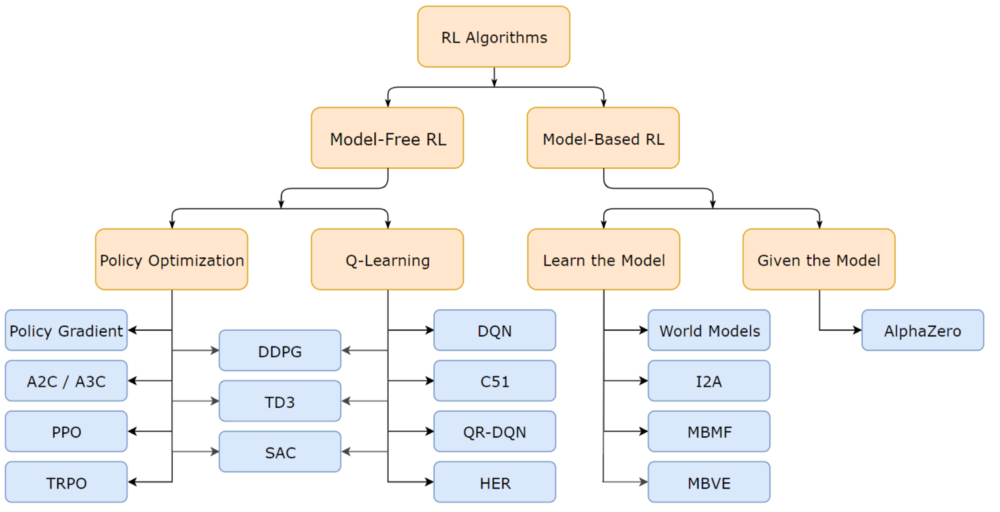

Reinforcement Learning Algorithms

We’ve already discussed reinforcement learning earlier in this post. RL algorithms are generally classified as model-based or model-free.

In model-based algorithms, the agent has, or learns, a model of the environment that describes how actions lead to state transitions and rewards.

For large problems this can become infeasible, as the model may have to represent every state and action in memory. In model-free algorithms, by contrast, you do not have to worry about a model that consumes much space.

These algorithms learn directly from trial and error, so they do not need a model of the environment’s state transitions. Various reinforcement learning algorithms are:

- State Action Reward State Action (SARSA)

- Q-learning

- Deep Q-network (DQN)

- Learning Automata

- Deep Deterministic Policy Gradient (DDPG)

- Normalized Advantage Function (NAF)

- Asynchronous Advantage Actor Critic (A3C)

- Trust Region Policy Optimization (TRPO)

- Proximal Policy Optimization (PPO)

- Constructing skill trees
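
Q-learning, a model-free algorithm from the list above, can be sketched on a tiny made-up environment: a corridor of five cells where the agent starts in cell 0 and is rewarded for reaching cell 4. The environment, the hyperparameters, and the purely random exploration policy are all assumptions chosen to keep the example short.

```python
import random

N_STATES = 5          # cells 0..4; cell 4 is the goal and ends the episode
ACTIONS = [0, 1]      # 0 = move left, 1 = move right
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor


def step(state, action):
    """Hypothetical environment: returns (next_state, reward)."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def train(episodes=500, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            action = rng.choice(ACTIONS)  # explore by trial and error
            next_state, reward = step(state, action)
            # Q-learning update: move Q(s, a) toward
            # reward + gamma * max over a' of Q(s', a')
            target = reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# Best action in every non-terminal cell according to the learned values
greedy_policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(greedy_policy)
```

The agent never builds a model of the corridor. It only keeps a table of action values that it updates from experienced transitions, and the learned greedy policy ends up moving right in every cell.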

Other Machine Learning Algorithms

- Feature selection algorithms

- Algorithm accuracy evaluation

- Performance measures

- Optimization algorithm

- ALOPEX

- CN2 algorithm

- Dynamic time warping

- FastICA

- Forward-backward algorithm

- GeneRec

- Leabra

- Linde–Buzo–Gray algorithm

- Local outlier factor

- Logic learning machine

- LogitBoost

- Markov chain Monte Carlo

- Prefrontal cortex basal ganglia working memory

- Weighted majority algorithm (WMA)

- Rprop

- Sparse PCA

- Structured kNN

- t-distributed stochastic neighbor embedding (t-SNE)

- Wake-sleep algorithm

So, that is all for today. Thanks to all our readers for the love you have shown for all our articles.

We’ve tried, and will always try, our best to provide you with the best machine learning, data science, programming, and artificial intelligence articles.

We hope this 100+ Machine Learning Algorithms mind map and guide to the types of machine learning algorithms will be helpful to you. Stay tuned for more!

Frequently Asked Questions:

What Is The Best Machine Learning Algorithm?

Six of the most widely used machine learning algorithms in 2024 are linear regression, logistic regression, decision trees, support vector machines (SVMs), Naive Bayes, and k-nearest neighbors (kNN).

What Are The 3 Types Of Machine Learning?

The three main types of machine learning are supervised, unsupervised, and reinforcement learning. Semi-supervised learning, described earlier in this post, is often counted as a fourth.

How Many ML Algorithms Are There?

There are hundreds of machine learning algorithms. We have listed more than 100 of them in this article.

Which ML Algorithm Should I Learn First?

The first machine learning algorithm you should learn depends on many things, but most people start with linear regression.

How Do You Write An ML Algorithm?

The steps to write any machine learning algorithm from scratch are:

1. Get a basic understanding of the algorithm.

2. Find some different learning sources.

3. Break the algorithm into chunks.

4. Start with a simple example.

5. Validate with a trusted implementation.

6. Write up your process.