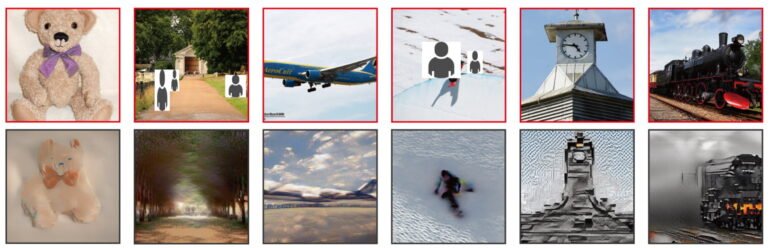

Researchers show that Stable Diffusion can be used to decode what a person has seen from their brain activity. Their approach reconstructs images from fMRI scans with high accuracy.

Researchers have used AI models to decode information from the human brain for years. Most methods feed prerecorded fMRI scans into a generative AI model that produces text or images.

In early 2018, a group of Japanese researchers showed how a neural network could reconstruct images from fMRI recordings. In 2019, another team achieved the same feat using recordings of monkey neurons. More recently, Jean-Remi King's research group at Meta has published findings on extracting text from fMRI data.

In October 2022, a group based in Austin demonstrated that GPT models could use fMRI scans to infer text describing the semantic content of videos a person was watching.

In November 2022, researchers at Stanford University, the Chinese University of Hong Kong, and the National University of Singapore used MinD-Vis to show how current generative AI models, such as those behind DALL-E, Midjourney and Stable Diffusion, can reconstruct images from fMRI scans with significantly higher accuracy than previously available methods.


Stable Diffusion Can Reconstruct Brain Images Without Fine-Tuning

Recently, researchers from Osaka University's Graduate School of Frontier Biosciences and CiNet, NICT (Japan) used a diffusion model, specifically Stable Diffusion, to reconstruct visual experiences from fMRI data.

This approach removes the need to train or fine-tune complex AI models. Only simple linear models have to be trained; they map the fMRI signals from the lower and upper visual brain regions to individual Stable Diffusion components.
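To make "simple linear models" concrete, here is a minimal sketch of ridge regression, a regularized linear model that is widely used for fMRI decoding. It assumes the fMRI responses are stored as a trials × voxels matrix `X` and the target Stable Diffusion features as a trials × dimensions matrix `Y`. The choice of ridge regression and all function names are illustrative assumptions, not the authors' code. It uses only the Python standard library, so it is suited to tiny examples only.

```python
# Illustrative ridge-regression decoder (hypothetical names, not the study's code).
# X: trials x voxels (fMRI responses), Y: trials x dims (target features).

def transpose(m):
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    bt = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in bt] for row in a]

def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    aug = [list(a[i]) + list(b[i]) for i in range(n)]  # augmented matrix [a | b]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        # Eliminate this column from every other row
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [v - f * w for v, w in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]

def fit_ridge(X, Y, lam=1.0):
    """Closed-form W minimizing ||X W - Y||^2 + lam * ||W||^2."""
    Xt = transpose(X)
    gram = matmul(Xt, X)
    for i in range(len(gram)):
        gram[i][i] += lam  # ridge penalty on the diagonal
    return solve(gram, matmul(Xt, Y))

def predict(X, W):
    """Apply the learned linear map to new fMRI responses."""
    return matmul(X, W)
```

For example, `fit_ridge([[1, 0], [0, 1], [1, 1]], [[2], [3], [5]], lam=1e-9)` recovers weights close to `[[2], [3]]`. With real recordings, `X` has many voxels, so practical code would use a linear-algebra library and choose `lam` by cross-validation.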

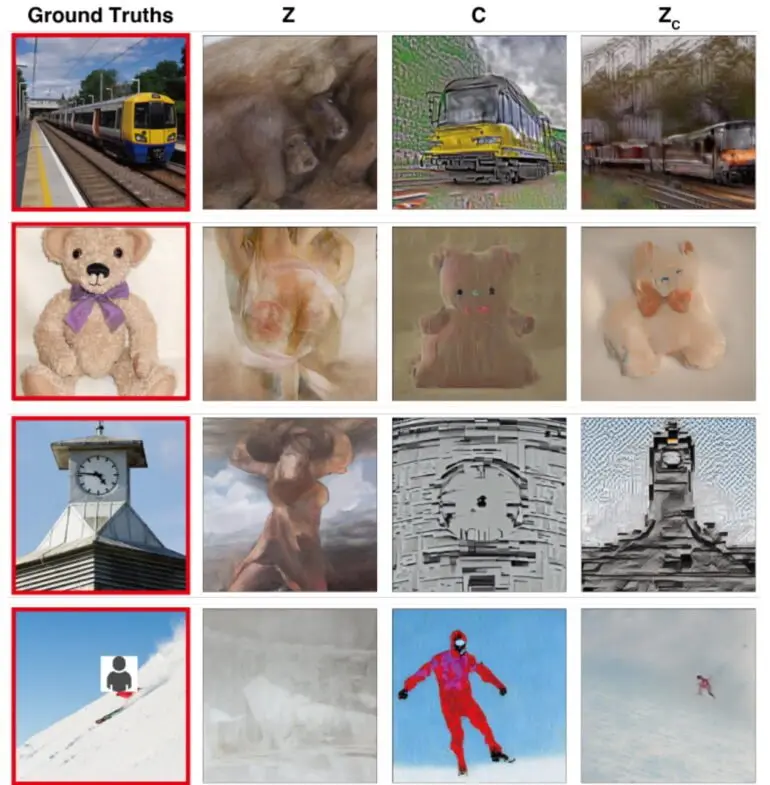

The researchers link each group of brain regions to one of Stable Diffusion's encoders: signals from the lower visual regions are mapped to the image encoder's representation, while signals from the upper visual regions are mapped to the text encoder's representation. As a result, the system can draw on both the composition of an image and its semantic content when reconstructing it, the researchers explained.
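The routing described above can be sketched as two independent linear decoders applied to different subsets of voxels. In this hypothetical sketch, `early_idx` and `higher_idx` are assumed index lists selecting lower and upper visual-cortex voxels, and `W_image` / `W_text` are assumed to be already-trained weight matrices (voxels × feature dimensions). None of these names come from the study.

```python
# Hypothetical routing sketch: ROI indices and weight matrices are assumptions.

def linear_decode(voxels, W):
    """Map a voxel vector to a feature vector with a (voxels x dims) weight matrix."""
    return [sum(v * W[i][j] for i, v in enumerate(voxels)) for j in range(len(W[0]))]

def decode_trial(scan, early_idx, higher_idx, W_image, W_text):
    """Decode one fMRI scan into an image latent z and a text embedding c."""
    early = [scan[i] for i in early_idx]    # lower visual regions
    higher = [scan[i] for i in higher_idx]  # upper visual regions
    z = linear_decode(early, W_image)  # image-encoder side: layout / composition
    c = linear_decode(higher, W_text)  # text-encoder side: semantic content
    return z, c
```

In a full pipeline, `z` and `c` would then be passed to Stable Diffusion, `z` as the image latent and `c` in place of the conditioning that normally comes from a text prompt. That step needs the actual model and is not shown here.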

For their experiment, the researchers used fMRI scans from the Natural Scenes Dataset (NSD) to test whether Stable Diffusion could reconstruct what subjects had seen.

Combining image and text decoding produced the most accurate reconstructions. The team notes that accuracy varied between subjects, and that these differences correlated with the quality of each subject's fMRI data.
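The article does not say how reconstruction accuracy was scored. A common choice in fMRI reconstruction work is two-way identification: for each reconstruction, check whether it is more similar to its own ground-truth image than to another image, and report the fraction of such comparisons it wins (chance is 50%). The sketch below assumes images or image features are flat lists of numbers and uses Pearson correlation as the similarity measure; both are assumptions for illustration.

```python
import math

# Illustrative two-way identification metric (an assumed evaluation, not the study's code).

def pearson(a, b):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def two_way_identification(recons, truths):
    """Fraction of pairwise comparisons in which a reconstruction correlates
    more with its own ground truth than with a different ground-truth image."""
    wins = total = 0
    for i, r in enumerate(recons):
        own = pearson(r, truths[i])
        for j, other in enumerate(truths):
            if j == i:
                continue
            total += 1
            if own > pearson(r, other):
                wins += 1
    return wins / total
```

A perfect decoder scores 1.0 and random guessing hovers around 0.5, which makes scores comparable across subjects with different data quality.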

fMRI Reconstruction Leads To A Better Understanding Of Diffusion Models

The team says its reconstructions are as good as those of the best available methods, with one key difference: existing AI models developed elsewhere, such as Stable Diffusion, can be used as-is without any training.

The team also uses models derived from fMRI data to study the individual building blocks of Stable Diffusion, including how semantic content emerges during the reverse diffusion process and what happens inside the U-Net.
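One way to study model components with fMRI-derived models is a voxel-wise encoding analysis: predict each voxel's response from the features of a given Stable Diffusion component, then measure how well the prediction matches the recorded signal in each brain region. The sketch below covers only the scoring step and assumes the predictions already exist; the data layout (voxels × trials) and the region labels are hypothetical.

```python
import math

# Illustrative scoring for a voxel-wise encoding analysis (hypothetical data layout).

def pearson(a, b):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)

def voxelwise_accuracy(predicted, measured):
    """predicted, measured: per-voxel response lists (voxels x trials).
    Returns one prediction-accuracy correlation per voxel."""
    return [pearson(p, m) for p, m in zip(predicted, measured)]

def mean_accuracy_by_roi(scores, roi_labels):
    """Average the per-voxel scores within each brain-region label."""
    sums, counts = {}, {}
    for s, roi in zip(scores, roi_labels):
        sums[roi] = sums.get(roi, 0.0) + s
        counts[roi] = counts.get(roi, 0) + 1
    return {roi: sums[roi] / counts[roi] for roi in sums}
```

Repeating this scoring with features from different components, for example the image latent, the text embedding, or U-Net layers at different denoising steps, would indicate which brain regions each component explains best.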

The team also provides a quantitative interpretation of how images are transformed at various stages of the diffusion process. The researchers hope this improves the understanding of diffusion models, which are popular but poorly understood, from a biological perspective.
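For context on what "stages" of diffusion refers to: in the standard denoising-diffusion formulation (the notation below is the usual DDPM convention, not taken from the article), a clean latent z_0 is mixed with Gaussian noise at step t as follows, where β_s is the noise schedule. Generation runs in reverse, from pure noise at t = T back to t = 0, with the U-Net predicting the noise ε at every step, so an analysis can compare what the model represents at early, middle, and late steps.

```latex
z_t = \sqrt{\bar{\alpha}_t}\, z_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon,
\qquad \epsilon \sim \mathcal{N}(0, I),
\qquad \bar{\alpha}_t = \prod_{s=1}^{t} (1 - \beta_s)
```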
